UPDATE December 2020

There is an improved and updated version of this article here:

https://seankearon.me/posts/2020/12/rebus-sagas-fsharp/

This post is about how to use Rebus from F# on .NET Core. Code for the below is here.

What is Rebus?

Rebus is a free, open-source service bus written in .NET. The source code is on GitHub and, whilst it’s absolutely free to use, there are also professional support services provided by rebus.fm if you need them. It is very similar to other .NET service buses like NServiceBus (commercial) or MassTransit (free).

Why not Functions as a Service?

You can use something like Azure Functions or AWS Lambda and easily knock up a simple message-based architecture.

Azure Functions give you a lot of integration points and management tooling out of the box and you can install on your own box so you don’t even need to be tied to the cloud. They’ve even got durability and orchestration now.

It’s all super cool and could well be all you need for most scenarios. Service buses like Rebus, NServiceBus or MassTransit have a whole lot of additional things that are not available out of the box with FaaS today, such as:

- Publish/Subscribe (with more than one subscriber!)

- Support for complex workflows (sagas)

- Can be used anywhere: web apps, desktop apps, services, the cloud…

- Groovy stuff like deferred messages

That’s not a full list and I’m not saying FaaS > Service Bus or FaaS < Service Bus. My reasons for choosing Rebus here are:

- I’ve been using it for years and trust it.

- I’m already deploying a .NET Core Web API and want to host my bus in that.

A Service Bus Inside a .NET Core Web API?

Really? WTF??? I’ve been handling complex, long-running workflows inside an Azure Web App for a couple of years now (read my previous article here). That implementation uses Rebus with C# on .NET 4.x and has been running like a dream for years. As it’s in an Azure Web App, you can scale it up or down by flicking a switch. I love it because it’s so simple!

So, given that I am writing a web API, I thought I’d use Rebus inside that. My API is written using F#, .NET Core and Giraffe. I’ve never seen anything about Rebus with F# before, but F# is just .NET and has excellent interoperability with C#.

By the way, I’m using F# because it’s expressive, concise and is a beautiful language for modelling domains. Read more from the venerable Scott Wlaschin here:

https://fsharpforfunandprofit.com/ddd/

https://fsharpforfunandprofit.com/posts/no-uml-diagrams/

Scott’s book, Domain Modeling Made Functional, is also an excellent read. If you’re coming to F# from C# then I recommend Get Programming with F# by Isaac Abraham as well as Scott’s website.

Anyway, let’s get started!

Create .NET Core API with Giraffe

Instructions on how to set up a Giraffe app using dotnet new are in Giraffe’s docs here. The command line I used was: dotnet new giraffe -lang F# -V none.

This will give you a basic, functional .NET Core web API project. To that, add the following Rebus NuGet packages: Rebus & Rebus.ServiceProvider.

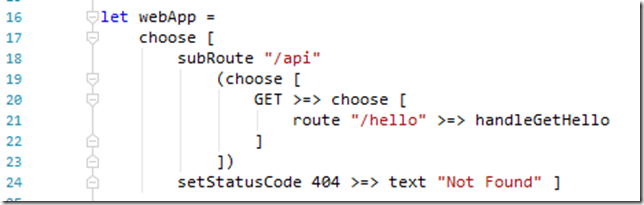

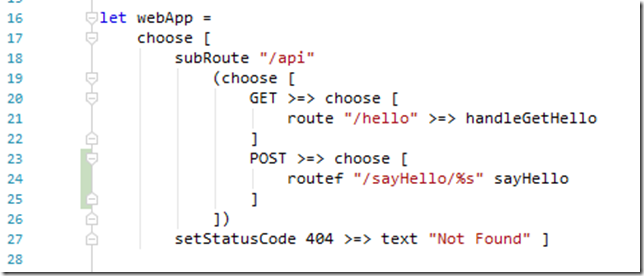

After that, you should be able to run your API and get a response from ~/api/hello. Here’s the routing configuration at this stage. Cool, eh?

Configure Rebus

We’re loosely following Rebus’ .NET Core configuration that you can find on their GitHub here. Instead of using Rebus’ in-memory transport as in the previous link, I’m going to use the file system transport. This is a great transport to use when hacking on Rebus as you can see your messages as files, and failed messages will be dropped into the “error queue”, which is a folder named “error”. By default, Rebus serialises messages to JSON with Base64 encoding of the message body, so they’re pretty easy to read. Error details are added to the message as headers. Sweet!

Create a Message

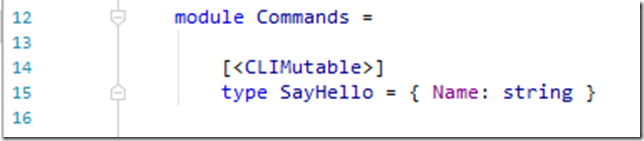

Firstly, we need a message that we will send to Rebus. In Rebus, messages are modelled using .NET classes. Our message is going to be a very simple command to tell the bus to say hello. Here it is:

So, this is an F# record type that holds a single string field called Name. The CLIMutable attribute tells the compiler to generate IL that’s more friendly for the .NET serialisers; read about that on Mark Seemann’s blog here.
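A minimal sketch of such a message, assuming it lives in a module named Commands (the name is inferred from the Commands.SayHello reference further down) and using "World" as a made-up value:

```fsharp
module Commands =

    // CLIMutable makes the compiler emit a parameterless constructor and
    // property setters, which reflection-based serialisers can use.
    // From F# code the type still behaves like an ordinary immutable record.
    [<CLIMutable>]
    type SayHello = { Name: string }

// Records are created with the usual record syntax.
let message : Commands.SayHello = { Name = "World" }
printfn "%s" message.Name
```

Without the attribute the record only has a constructor that takes every field, which some serialisers can't call.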

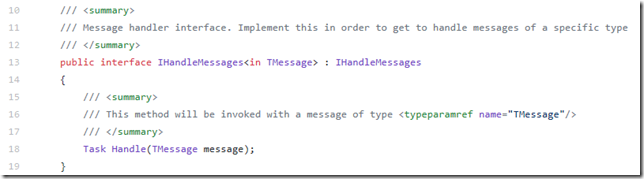

Create a Message Handler

Next, we need something to handle those messages. In Rebus, that’s a class that implements IHandleMessages<MyMessageType>; these classes are then registered with the bus. Rebus gives you fine-grained control over how these are handled, but we only need a very simple handler. Here is what the interface looks like:

Here’s what our F# implementation to handle our messages of type Commands.SayHello looks like:

This is a class in F# with a default constructor that implements the IHandleMessages<Commands.SayHello> interface. (A good place to start reading about classes is here.)
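As a rough sketch, a handler along those lines could look like the following. The interface has a single Handle method that takes the message and returns a Task. The class name SayHelloHandler and the greeting text are assumptions, and RunAsRebusTask here is a one-line stand-in for the conversion helper discussed next. It needs the Rebus NuGet package.

```fsharp
open System.Threading.Tasks
open Rebus.Handlers

module Commands =
    [<CLIMutable>]
    type SayHello = { Name: string }

// Stand-in for the conversion helper (an assumption, not the original code):
// starts the Async workflow and exposes it as a plain Task.
let RunAsRebusTask (work: Async<unit>) : Task =
    Async.StartAsTask work :> Task

// A class with a default constructor that implements the Rebus handler interface.
type SayHelloHandler() =
    interface IHandleMessages<Commands.SayHello> with
        member this.Handle(message: Commands.SayHello) : Task =
            async { printfn "Hello, %s!" message.Name }
            |> RunAsRebusTask
```

Because F# implements interfaces explicitly, the handler has to be upcast to IHandleMessages<Commands.SayHello> before Handle can be called on it directly; Rebus does that for you when it dispatches a message.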

Rebus, being written in C#, uses System.Threading.Tasks.Task for concurrency. F# has its own support for concurrency using Async workflows, and these are not the same thing. You can, however, convert between the two models.

It’s worth noting that Giraffe works with Task/Task<T> and not F#’s Async. It does this to avoid having to always convert.

If you look at the code above, we are using F#’s Async and then piping that to RunAsRebusTask to convert back to a Task. Here’s how I’m doing that (using code from Veikko Eeva's comment here):
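A minimal sketch of such a conversion, using only Async.StartAsTask and Async.AwaitTask from FSharp.Core. The version in the linked comment may well differ, and awaitPlainTask is a hypothetical name for going in the other direction:

```fsharp
open System.Threading.Tasks

// Async<unit> -> Task: start the workflow, then upcast Task<unit> to the
// non-generic Task that Rebus handlers return.
let RunAsRebusTask (work: Async<unit>) : Task =
    Async.StartAsTask work :> Task

// Task -> Async<unit>: lets an async workflow wait on a plain Task.
let awaitPlainTask (t: Task) : Async<unit> =
    Async.AwaitTask t

// Round trip: run a workflow as a Task, then wait for it from another workflow.
let completed = ref false
let asTask = async { completed.Value <- true } |> RunAsRebusTask
awaitPlainTask asTask |> Async.RunSynchronously
```

Note that Async.StartAsTask starts the work immediately, which is what you want in a handler that Rebus is about to await.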

Configure and Start the Bus

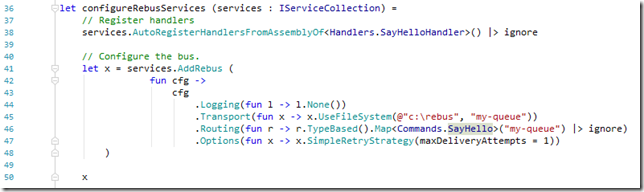

Lastly, we need to configure and start Rebus’ bus. We do that like this:

This is nearly identical to the C# equivalent. The two notable changes are piping output to ignore, which is needed because F# expects any expression whose result is discarded to have type unit, and the use of the different F# lambda syntax: fun x -> ...

By default, Rebus will retry handling a message 5 times before it fails. If you’re hacking on Rebus, you may want only one exception in your message. You can configure the number of retries like you see on line 47 (again, a slightly different syntax to what you’d use in C#).
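Putting the last few paragraphs together, a configuration function might look roughly like this. It's a sketch rather than a copy of the original, written against the older Rebus API in which the retry option is called SimpleRetryStrategy, so check the calls against the version you install. The function name configureRebus, the queue name, the folder and the handler class are all assumptions.

```fsharp
open System.IO
open System.Threading.Tasks
open Microsoft.Extensions.DependencyInjection
open Rebus.Config
open Rebus.Handlers
open Rebus.Retry.Simple
open Rebus.Routing.TypeBased
open Rebus.ServiceProvider
open Rebus.Transport.FileSystem

module Commands =
    [<CLIMutable>]
    type SayHello = { Name: string }

// Minimal handler so the sketch compiles on its own (the name is an assumption).
type SayHelloHandler() =
    interface IHandleMessages<Commands.SayHello> with
        member this.Handle(message: Commands.SayHello) : Task =
            printfn "Hello, %s!" message.Name
            Task.CompletedTask

let queueName = "fsharp-rebus"                              // hypothetical queue name
let queueFolder = Path.Combine(Path.GetTempPath(), "rebus") // hypothetical folder

let configureRebus (services: IServiceCollection) : unit =
    // Register the message handlers found in this assembly.
    services.AutoRegisterHandlersFromAssemblyOf<SayHelloHandler>() |> ignore

    services.AddRebus(fun (configure: RebusConfigurer) ->
        configure
            // Messages become files under queueFolder.
            .Transport(fun t -> t.UseFileSystem(queueFolder, queueName) |> ignore)
            // Send SayHello commands to our own input queue.
            .Routing(fun r -> r.TypeBased().Map<Commands.SayHello>(queueName) |> ignore)
            // One delivery attempt, so a failed message carries a single exception.
            .Options(fun o -> o.SimpleRetryStrategy(maxDeliveryAttempts = 1)))
    |> ignore
```

The named argument is written maxDeliveryAttempts = 1 in F#, where C# would use maxDeliveryAttempts: 1.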

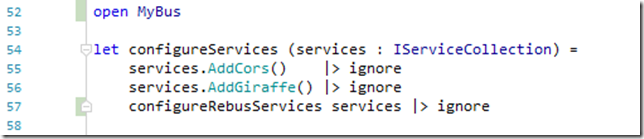

Then just hook that method up in your .NET Core service configuration as follows:
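A sketch of that hook-up, assuming the configureServices and configureApp functions generated by the Giraffe template and a Rebus.ServiceProvider version that still has the UseRebus extension (later versions start the bus differently). The configureRebus shown here is a trimmed stand-in for the bus configuration function, and the folder, queue name and webApp are placeholders:

```fsharp
open System.IO
open Microsoft.AspNetCore.Builder
open Microsoft.Extensions.DependencyInjection
open Giraffe
open Rebus.Config
open Rebus.ServiceProvider
open Rebus.Transport.FileSystem

// Trimmed stand-in for the bus configuration function (an assumption).
let configureRebus (services: IServiceCollection) : unit =
    let folder = Path.Combine(Path.GetTempPath(), "rebus") // hypothetical folder
    services.AddRebus(fun (configure: RebusConfigurer) ->
        configure.Transport(fun t -> t.UseFileSystem(folder, "fsharp-rebus") |> ignore))
    |> ignore

// Placeholder web app; the real one is the routing shown elsewhere in the post.
let webApp : HttpHandler = route "/api/hello" >=> text "Hello world"

let configureServices (services: IServiceCollection) =
    services.AddGiraffe() |> ignore
    configureRebus services // register the bus next to Giraffe

let configureApp (app: IApplicationBuilder) =
    // Resolve the bus from the container so that it starts receiving messages.
    app.ApplicationServices.UseRebus() |> ignore
    app.UseGiraffe webApp
```

Registering the bus in the same container as the web app is what later lets an HTTP handler ask for IBus.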

Send a Message to the Bus

All good, we’ve got our Rebus bus running and waiting to handle messages. We just need to send it a message.

In my last article, I used a timer inside the web app to send a message. This was because I wanted to be able to leave it running for a day or so and come back to see that the bus had stayed running over that time. This time, I’m going to send a message to the bus as a result of a POST call to the API.

Set the Routing

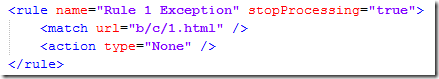

Change the routing section to add a POST call, like this:

Note that there is no “fish” between the route and the handler when you use routef.
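For illustration, the routing could end up looking something like this. The GET route mirrors the ~/api/hello endpoint mentioned earlier; the POST path and the placeholder sayHello handler are assumptions standing in for the real ones:

```fsharp
open Giraffe

// Placeholder so the sketch compiles on its own; the real handler
// sends a message to the bus.
let sayHello (name: string) : HttpHandler =
    text (sprintf "Hello, %s" name)

let webApp : HttpHandler =
    choose [
        // route is composed with its handler using >=> (the "fish")...
        GET  >=> route "/api/hello" >=> text "Hello world"
        // ...whereas routef takes the handler function as an argument
        // and passes it the value parsed from %s.
        POST >=> routef "/api/hello/%s" sayHello
        setStatusCode 404 >=> text "Not Found"
    ]
```

The format string is type checked, so "%s" requires a handler function that takes a string.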

Create an HTTP Handler

We’re using Giraffe’s routef function to configure a route that passes off to sayHello. The implementation of which looks like this:

This function receives the string parameter passed from the routing and returns an HttpHandler. You can see it uses task { … } starting on line 23, which is Giraffe’s Task implementation mentioned earlier.

Line 25 does the business and sends a new message to the bus. We then return OK with a message saying the world is good.

On line 24 you can see that it uses an extension method on the ctx parameter (which is of type HttpContext). This is how you retrieve registered services from inside your HTTP handlers. If you’re coming from a C# background you may be expecting to see some sort of dependency injection. Dependencies are more explicit in a functional language – here they come from being passed in as a parameter. Read more about dependency management in functional programming here. In an OO codebase, line 24 would be called a Service Locator and lots of people would get very hot and bothered if they saw that. Don’t worry, it’s fine. The world isn’t going to end and your codebase will stay nice and maintainable!
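A hedged sketch of a handler with that shape (it won't match the original's line numbers, and the response text is made up). It assumes the Commands.SayHello record from earlier and a task { … } builder in scope: that is built in from F# 6, whereas older Giraffe projects open FSharp.Control.Tasks.V2.ContextInsensitive to get it.

```fsharp
open Microsoft.AspNetCore.Http
open Giraffe
open Rebus.Bus

module Commands =
    [<CLIMutable>]
    type SayHello = { Name: string }

let sayHello (name: string) : HttpHandler =
    fun (next: HttpFunc) (ctx: HttpContext) ->
        task {
            // Ask the request's service provider for the bus that was registered at startup.
            let bus = ctx.GetService<IBus>()
            // Send the command; routing decides which queue it ends up in.
            let message : Commands.SayHello = { Name = name }
            do! bus.Send(message)
            // Reply with 200 OK and a plain-text body.
            return! text (sprintf "Said hello to %s" name) next ctx
        }
```

The only dependency is resolved from the HttpContext that Giraffe passes in, so the function needs nothing beyond its parameters.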

All Done – Run it!

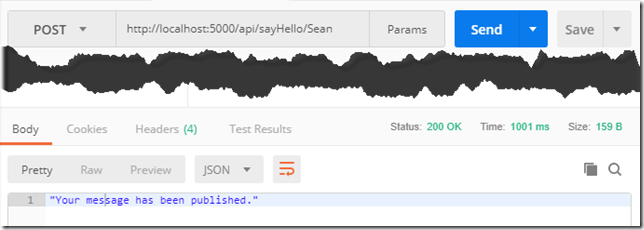

Run the project, and then post to our API and you get back a 200 OK with a nice message:

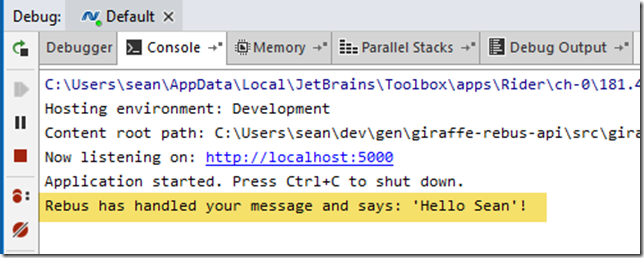

If you look in the console output from the app, you’ll see that our message has been handled by our message handler, which has printed out a nice message for us:

And there you have it – an F# implementation of a Rebus service bus running inside a Giraffe .NET Core Web API app!

Closing Thoughts

You can easily adapt the code above to run in other hosts, such as a console app, a service or a desktop app.

Remember that you’re not limited to using the file system to transport your messages. Rebus provides a whole load of transports that you can use, ranging from RabbitMQ and Azure Storage Queues to databases like RavenDB, Postgres and SQL Server. You can see a list of what’s supported in .NET Core on this thread.

If you’re going to host in an Azure Web App, don’t forget to set the app to be “always-on”, as shown at the end of my previous article. You can also read a little about how to scale your bus in there too.

Code for the above is here.